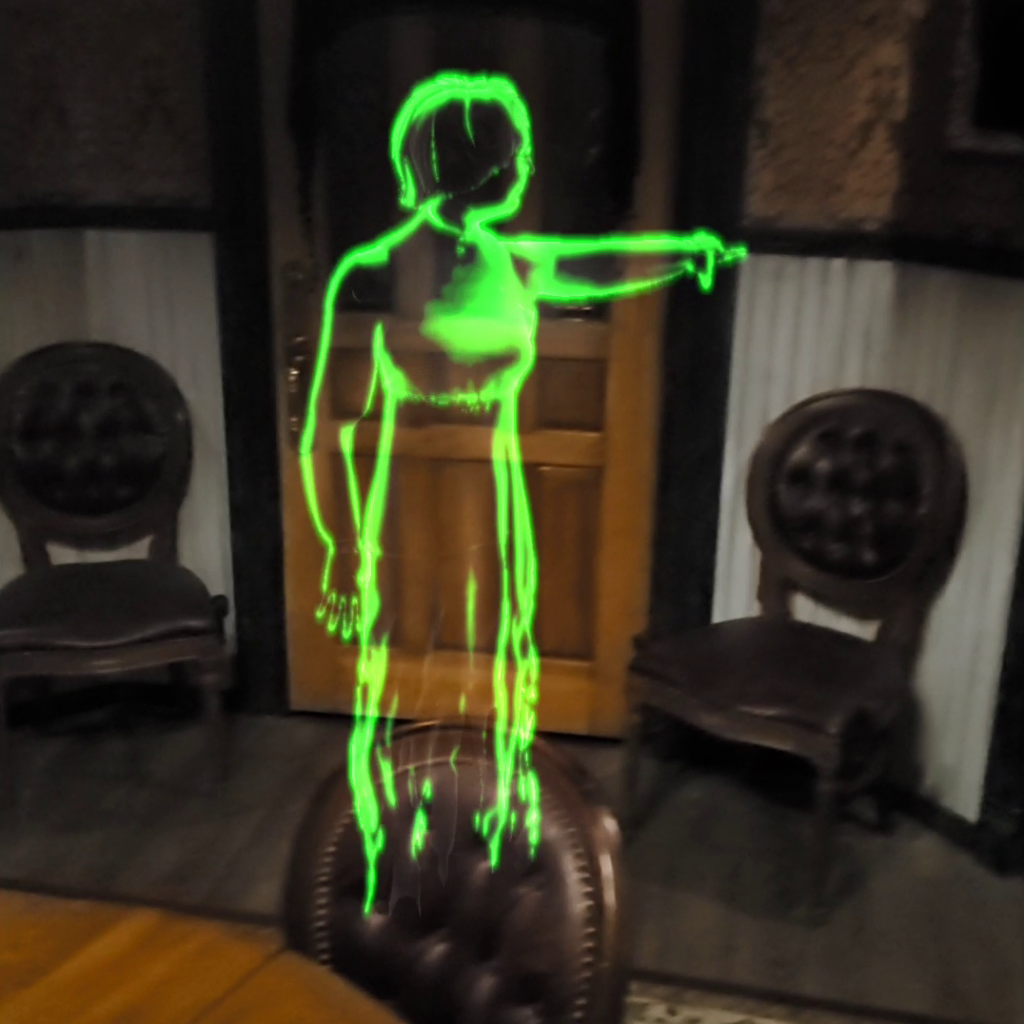

A VR Puzzle Game created by Rose City Games.

My role: environment art, 3D modeling, texturing, and real-time VFX

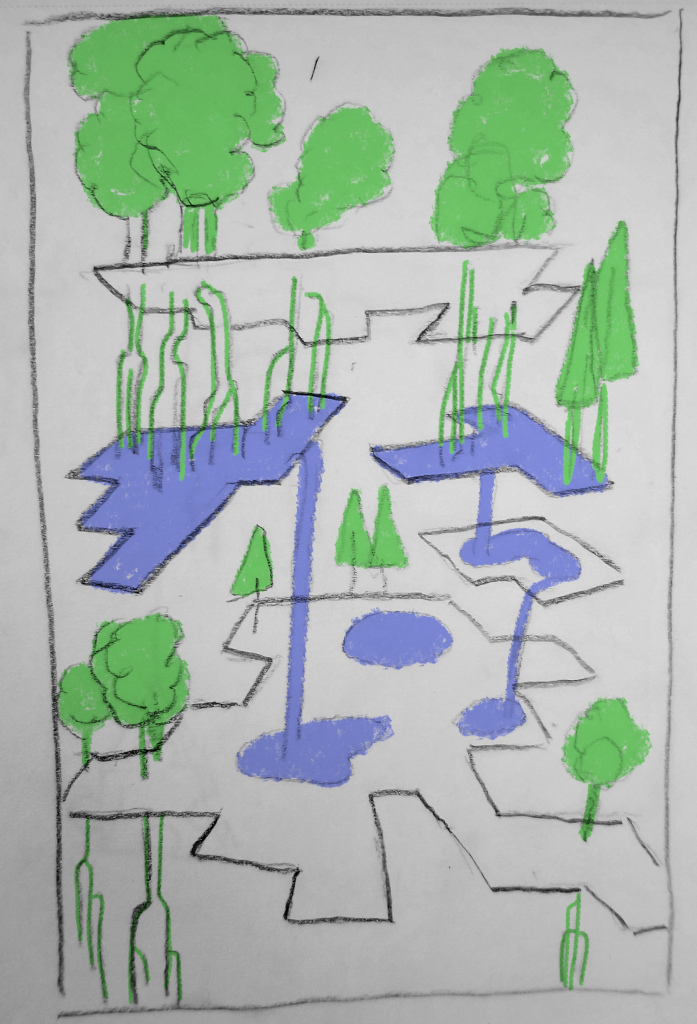

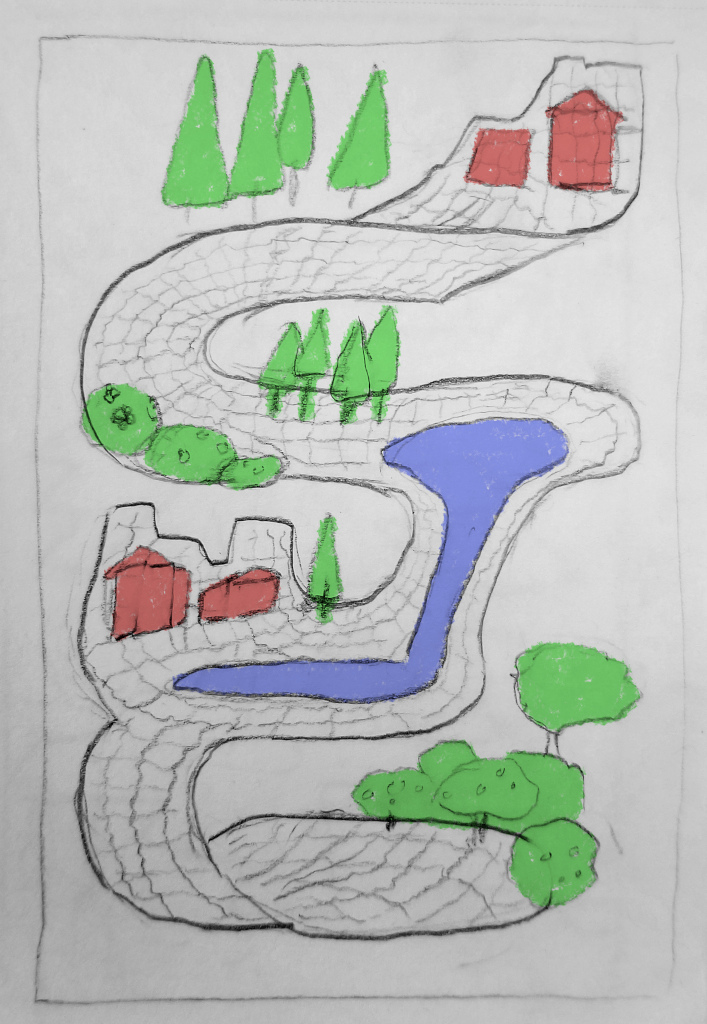

Being an environment artist on LOVESICK was a delightful and challenging experience. I was lucky to work from concept art by the creative director/2D artist, which defined both the general look of each area of the game and its bold colorscapes. My job was to fill in the details of many of the virtual spaces and objects. I worked alongside a fellow environment artist, a character artist, the 2D artist, and many other talented folks. Two factors set the unique constraints within which I created models:

1) The limitations of building out large environments without overwhelming the processing power of a VR headset.

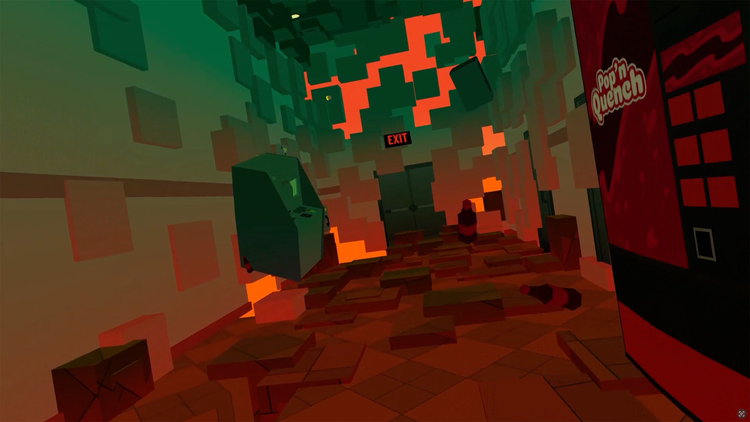

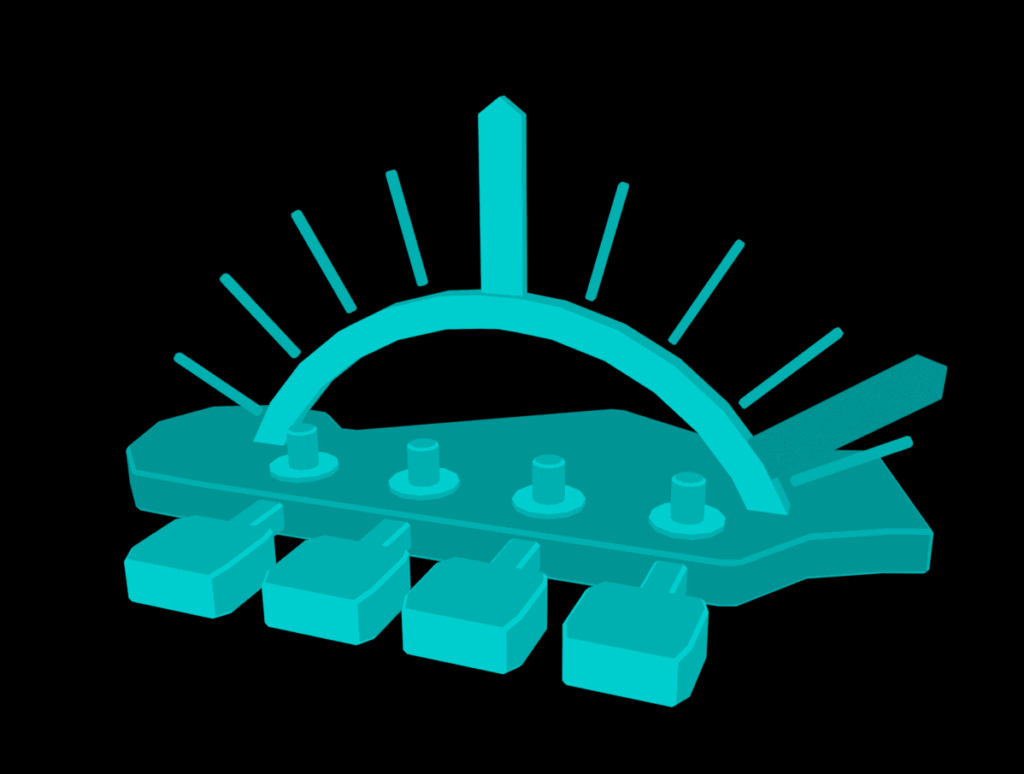

2) The stylized cel shader that was applied near-universally throughout the game.

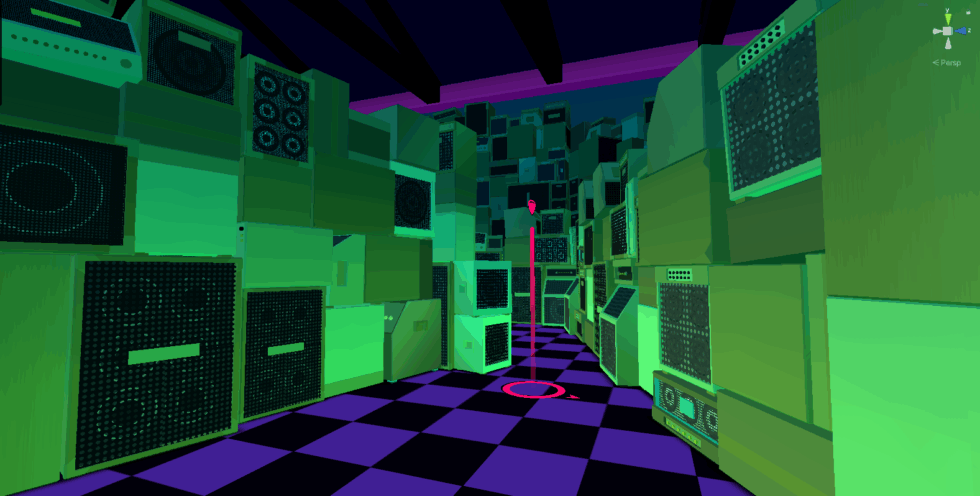

Challenge #1: Stylized But Legible

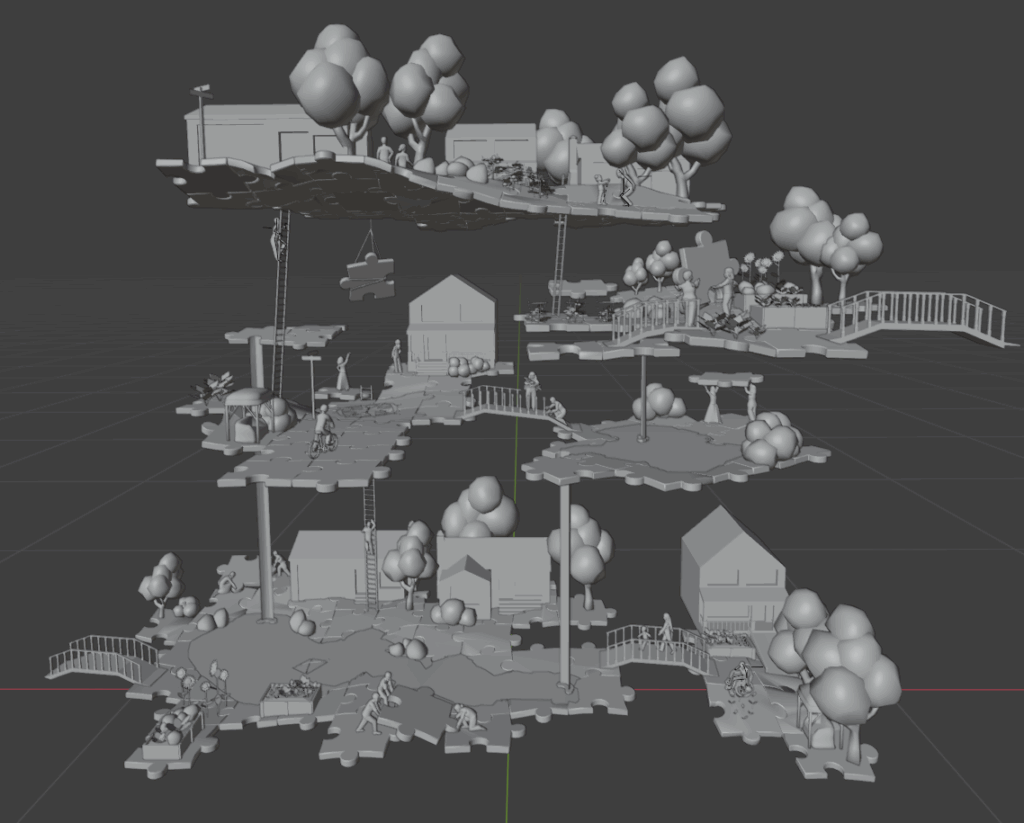

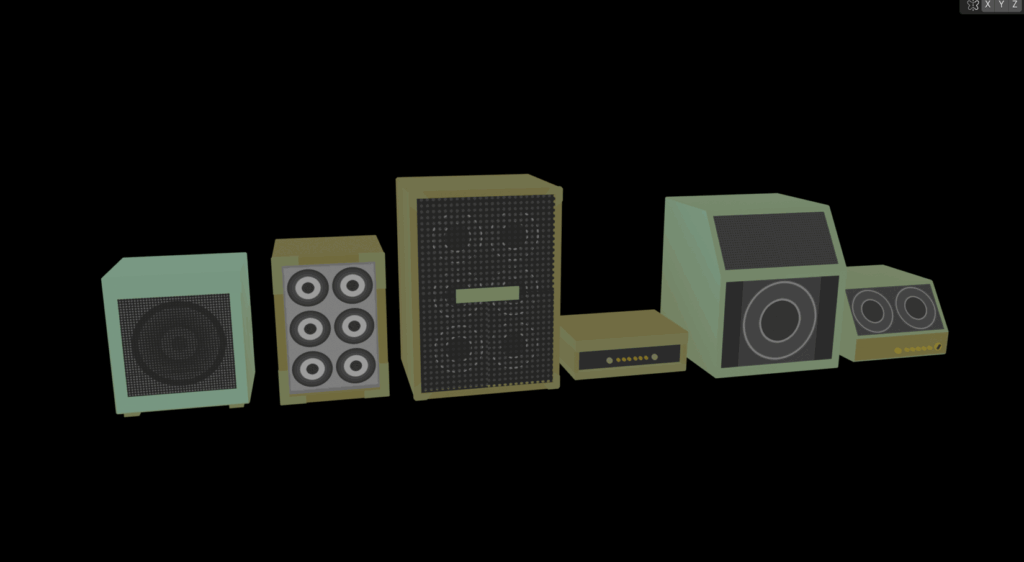

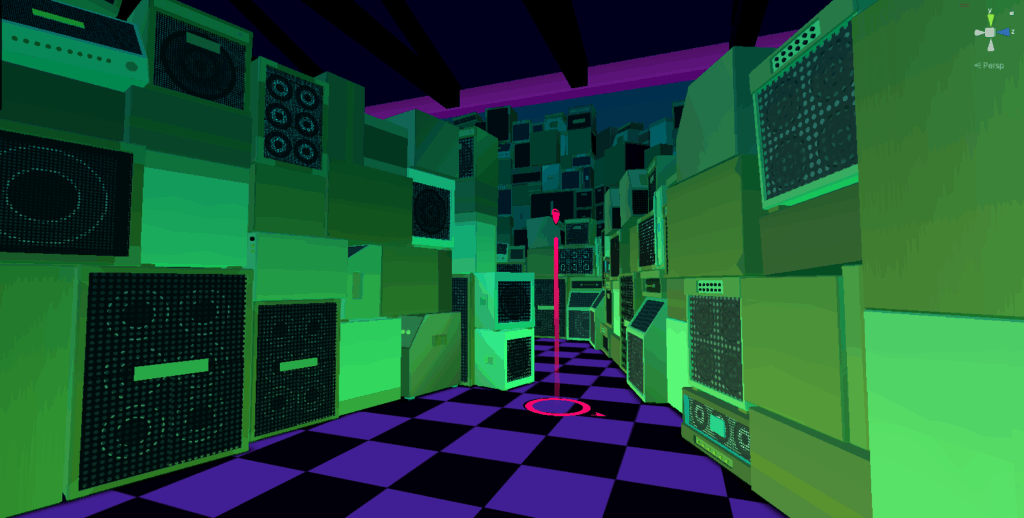

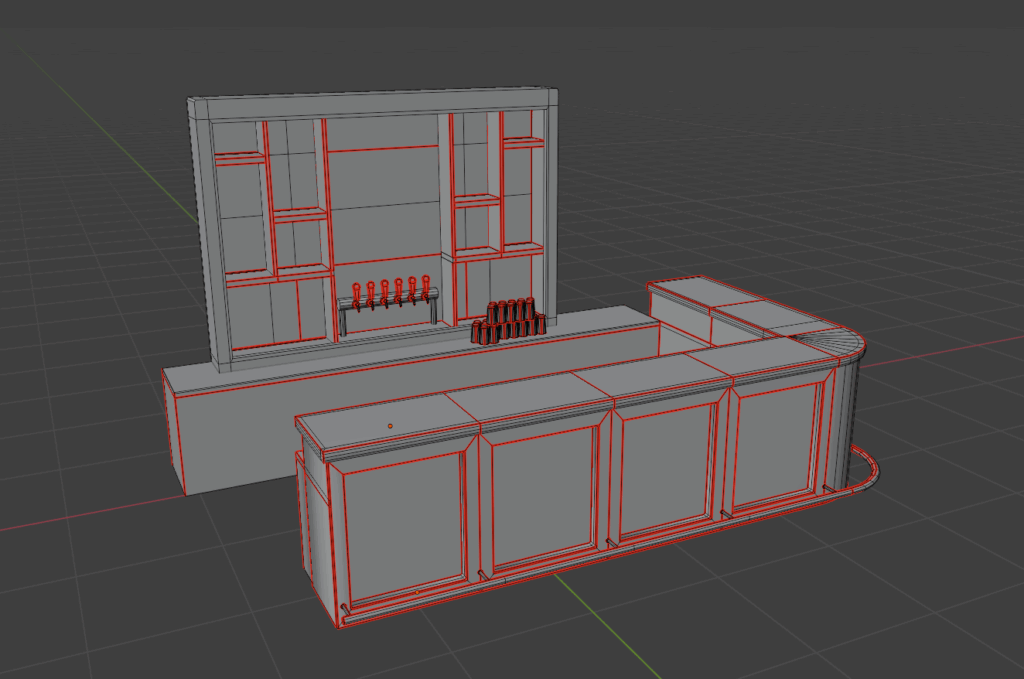

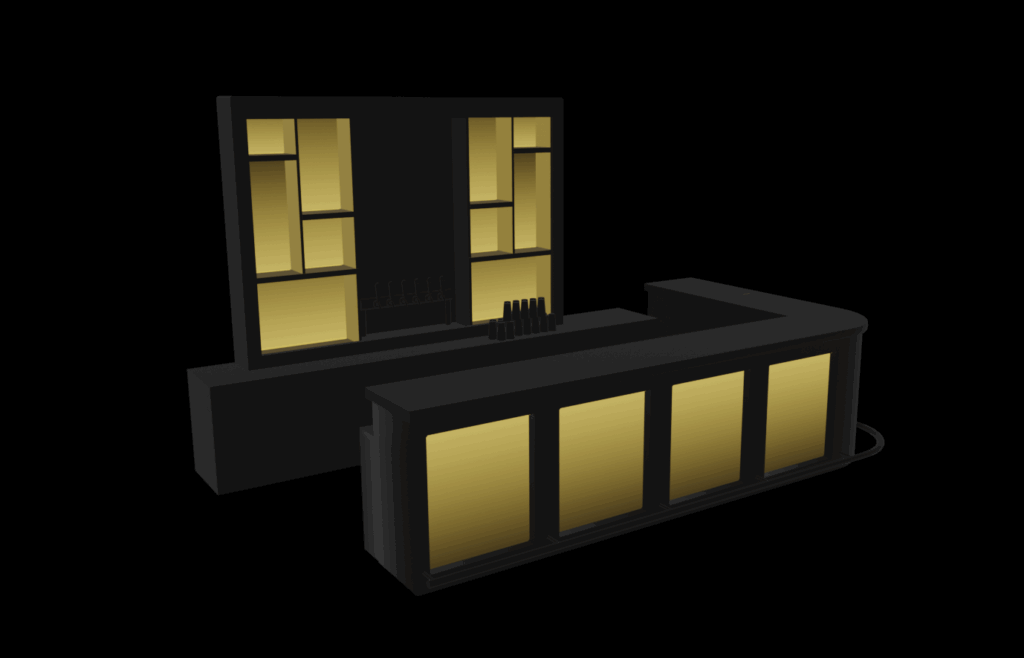

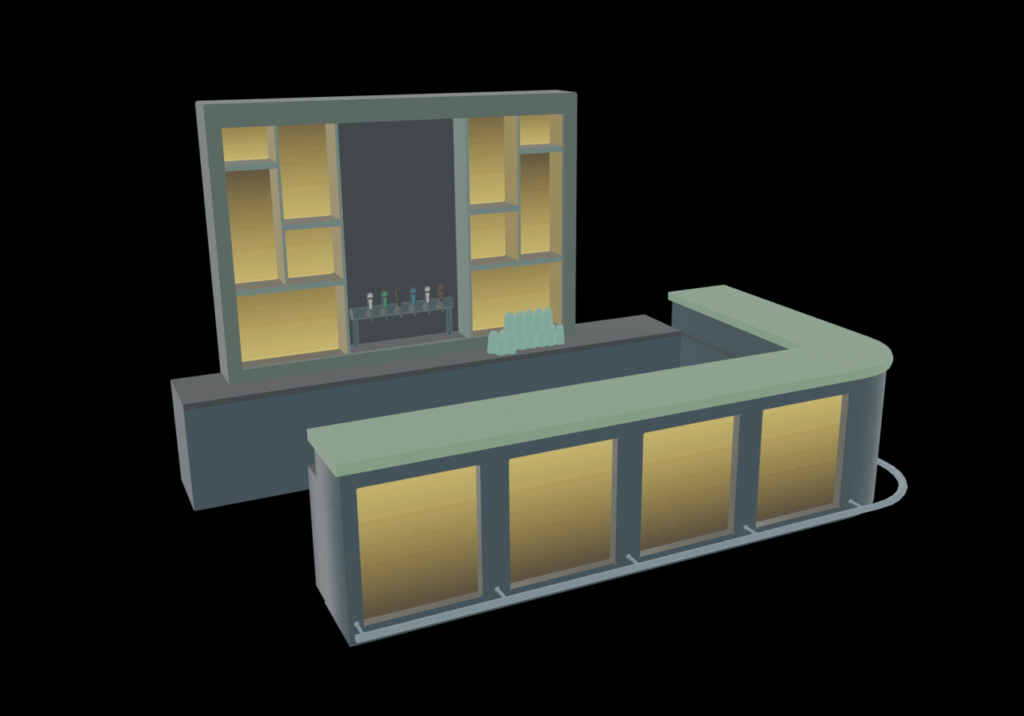

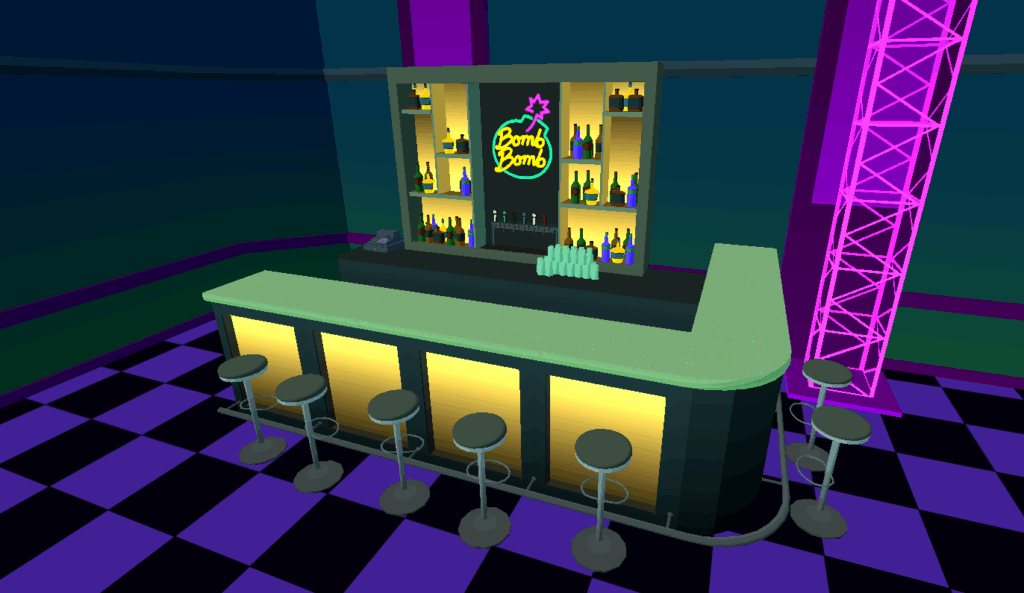

The cel shader defined the game’s aesthetic and allowed a lot of broad control over the colors, but it risked making objects look visually flat if I didn’t set the models up in a way that made them read clearly.

In some places, that meant adding highlights, lowlights, and small details to define the contours of objects. In others, it meant using patterning or decals to provide contrast and a sense of distance.

In still other cases, it meant using a pseudo light map: painting an object so it looks illuminated without there being an actual light source! (Light sources are very processor-intensive in a game engine, so any opportunity to avoid one is a good opportunity.) Our lead developer adapted the shader to make this approach work efficiently.
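This is a rough illustration of the idea, not the lead developer's actual shader, whose details aren't described here. A painted light map can be thought of as a grayscale value per texel that scales the base color. The sketch assumes a hypothetical convention: a painted value of 0.5 means "unchanged," lower values darken, and higher values brighten. The result is clamped to the displayable range.

```python
def apply_painted_light(base_rgb, painted_light, max_boost=2.0):
    """Shade one texel with a hand-painted light value instead of a real light.

    base_rgb: (r, g, b) base color, each channel in 0..1.
    painted_light: grayscale value in 0..1 painted by the artist.
    max_boost: hypothetical scale; with 2.0, a painted value of 0.5
        leaves the color unchanged and 1.0 doubles it.
    """
    factor = painted_light * max_boost
    # Clamp each channel so brightened colors stay within 0..1.
    return tuple(min(1.0, channel * factor) for channel in base_rgb)


def apply_painted_light_map(texels, light_map, max_boost=2.0):
    """Apply a painted light map to a whole texture (two equal-length lists)."""
    return [
        apply_painted_light(rgb, light, max_boost)
        for rgb, light in zip(texels, light_map)
    ]


# A wall that fades from "lamp-lit" to shadowed, with no light source in the scene.
wall = [(0.4, 0.2, 0.6)] * 3
glow = [1.0, 0.5, 0.25]
print(apply_painted_light_map(wall, glow))
```

The lighting cost is paid once, when the artist paints the map. At runtime it is only a multiply per texel.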

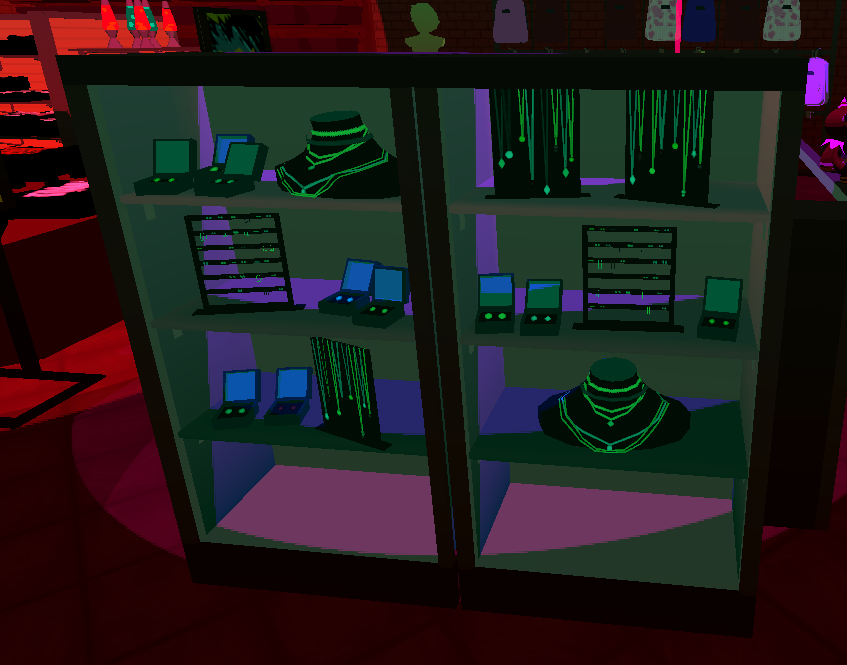

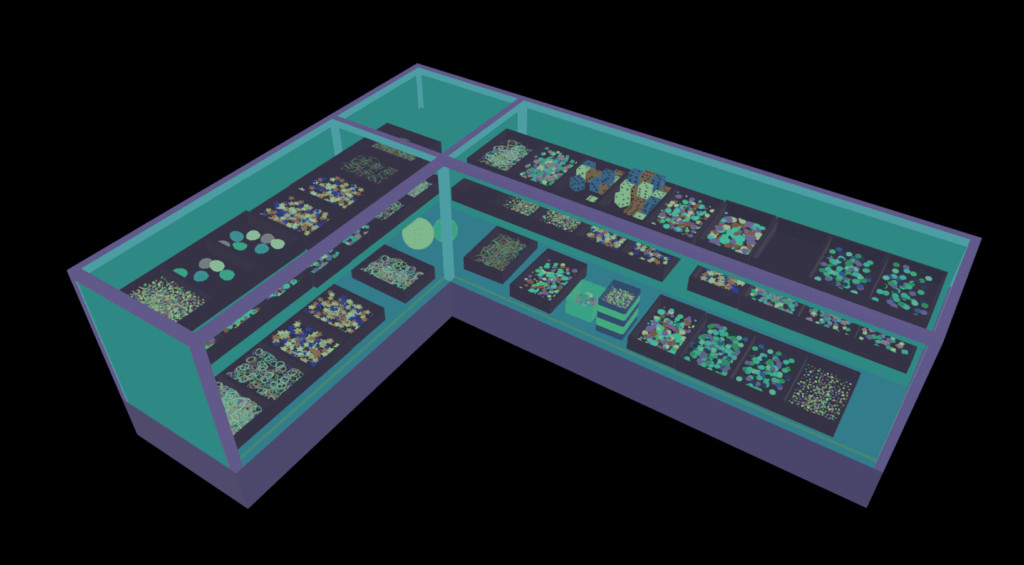

I also learned from the dev team that partially transparent materials are very processor-intensive, so we wanted to avoid those as well. To be efficient, things needed to be either fully opaque or completely invisible. One of my favorite effects in this project was making “glass” display cases that fool the eye into seeing semi-transparent glass when there actually isn’t anything there. I did this by tinting everything inside the case slightly blue-green. Because the color shift ends at a specific line, the viewer assumes that’s where the glass is!
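The glass trick is really just a color operation. In the game it would live in the textures or material colors, but the arithmetic can be sketched in Python. The tint values and the per-object `inside_case` flag below are hypothetical, chosen only to illustrate the idea. Multiplying by a pale blue-green pulls red down the most, the way colored glass filters light.

```python
# Hypothetical pale blue-green filter; the red channel is reduced the most.
GLASS_TINT = (0.80, 0.95, 0.92)


def tint_behind_glass(rgb, tint=GLASS_TINT):
    """Multiply a color by the tint, like light passing through colored glass."""
    return tuple(channel * t for channel, t in zip(rgb, tint))


def shade_display_case(objects, tint=GLASS_TINT):
    """objects: list of (name, rgb, inside_case) tuples.

    Only objects inside the case are tinted. The boundary where the tint
    stops is what reads as the surface of the (nonexistent) glass.
    """
    return {
        name: tint_behind_glass(rgb, tint) if inside_case else rgb
        for name, rgb, inside_case in objects
    }


scene = [
    ("trophy", (0.9, 0.8, 0.3), True),
    ("pedestal", (0.6, 0.6, 0.6), False),
]
print(shade_display_case(scene))
```

Every object stays fully opaque, so nothing here needs the expensive transparent rendering path.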

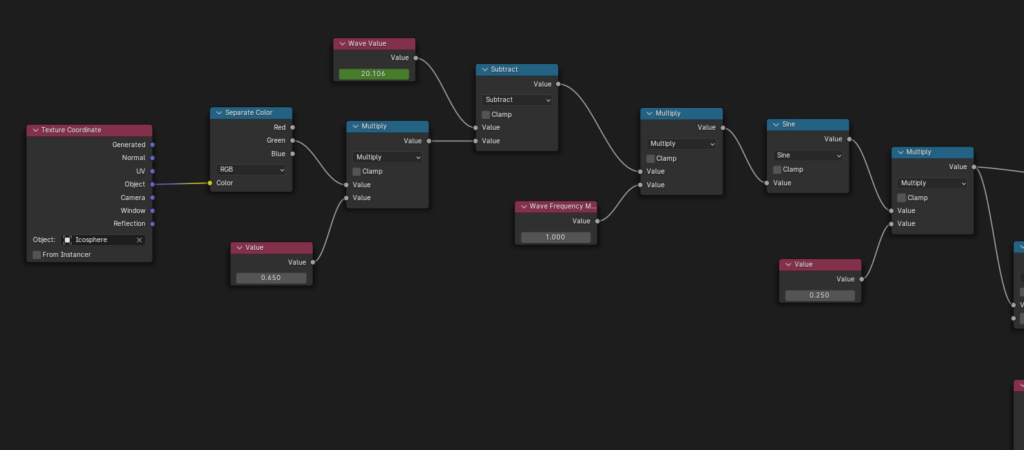

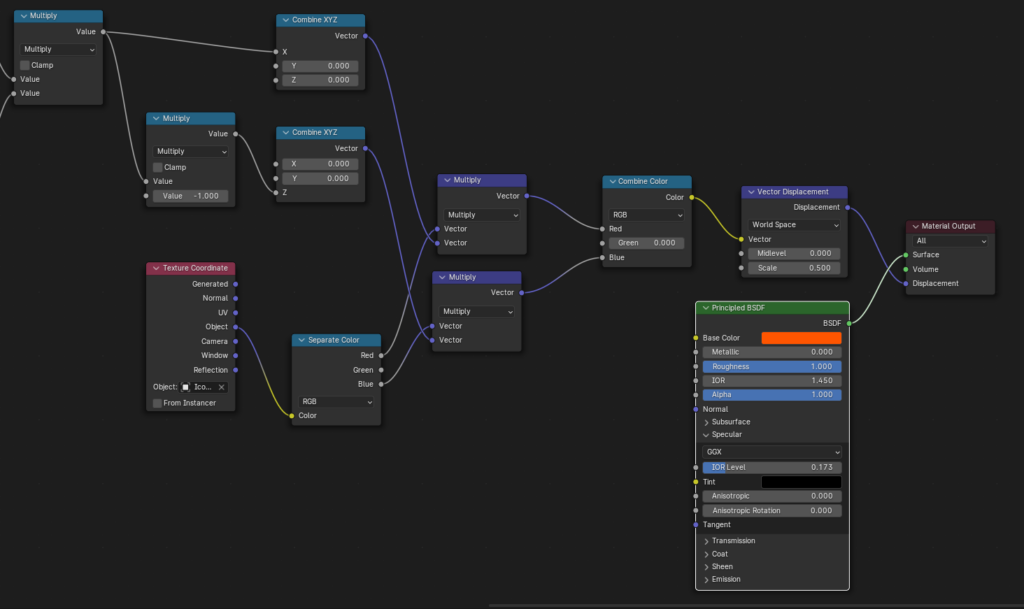

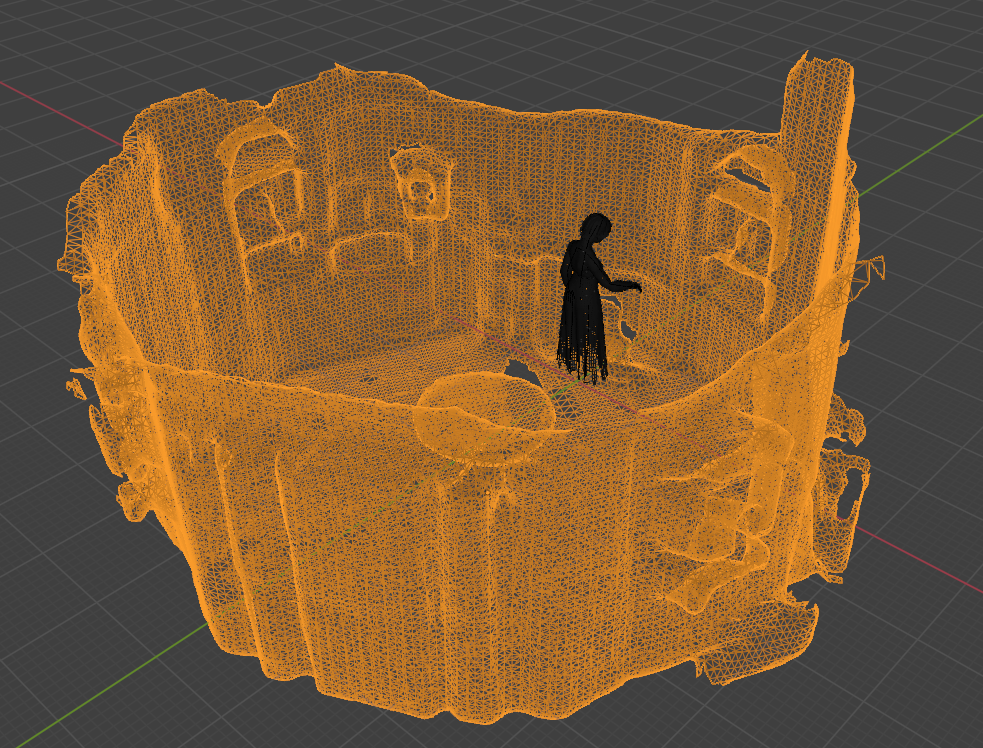

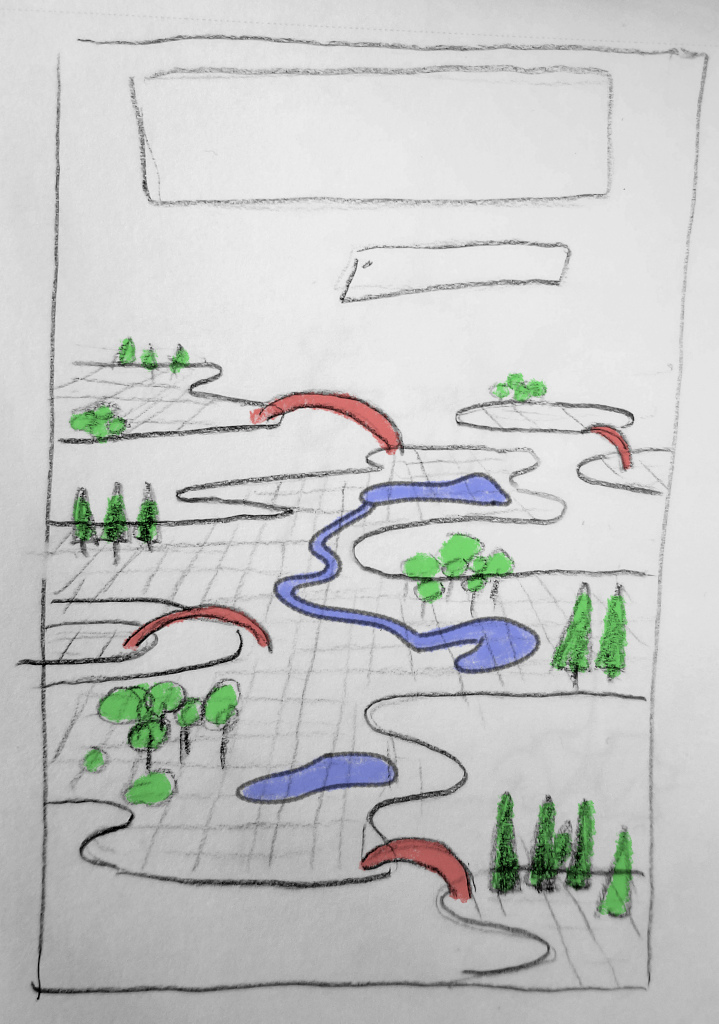

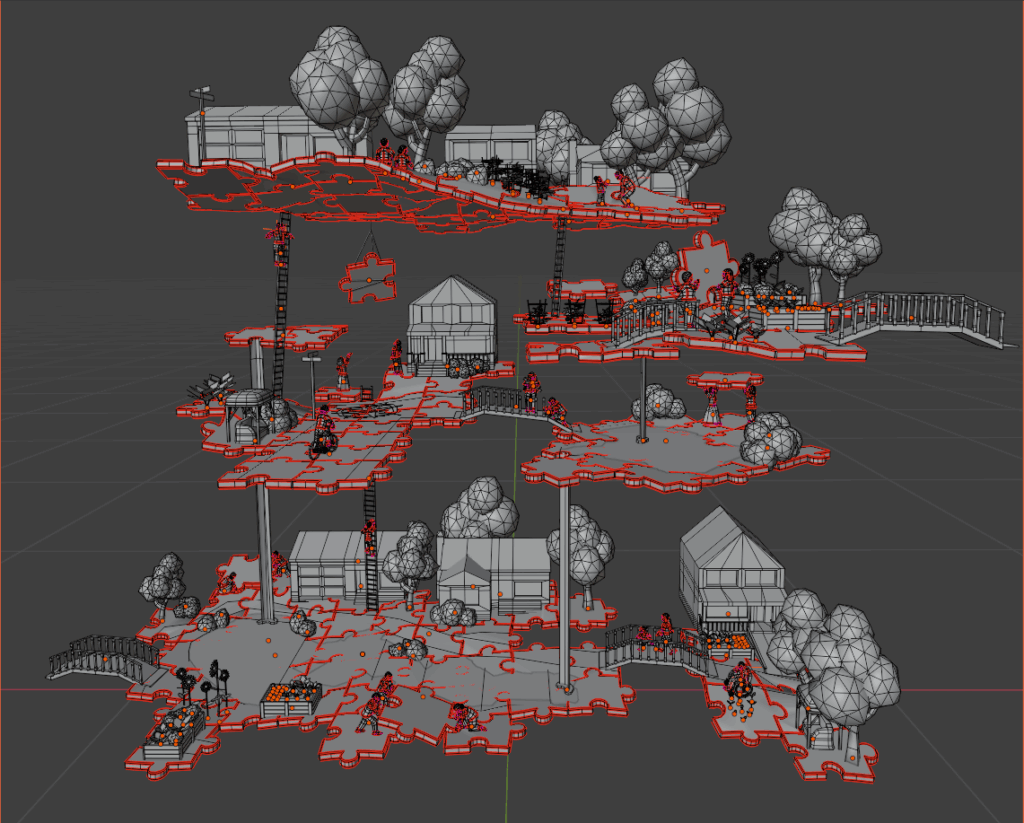

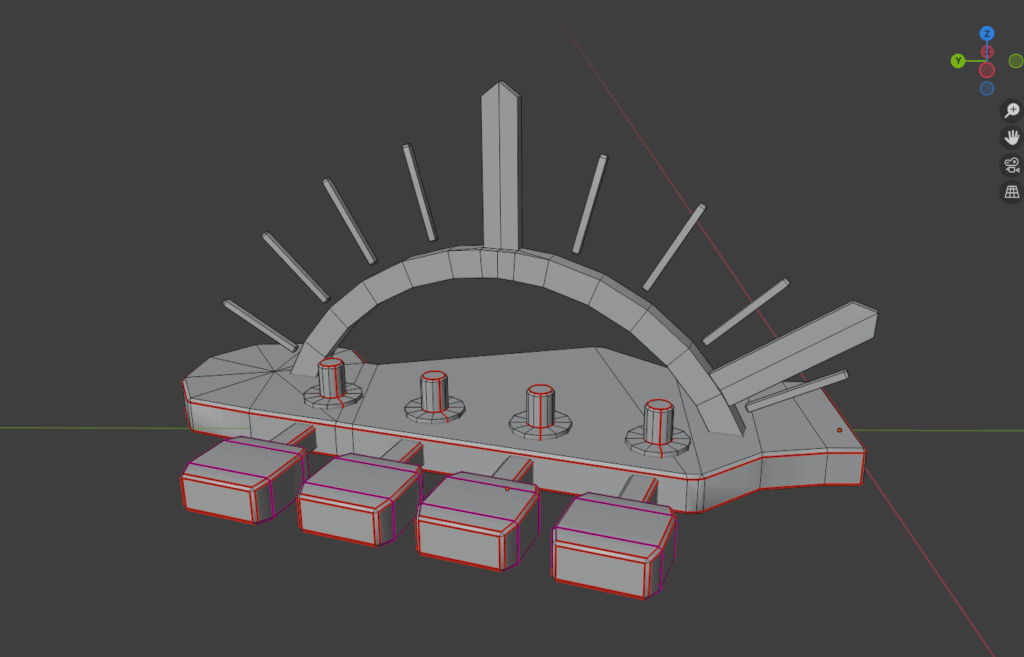

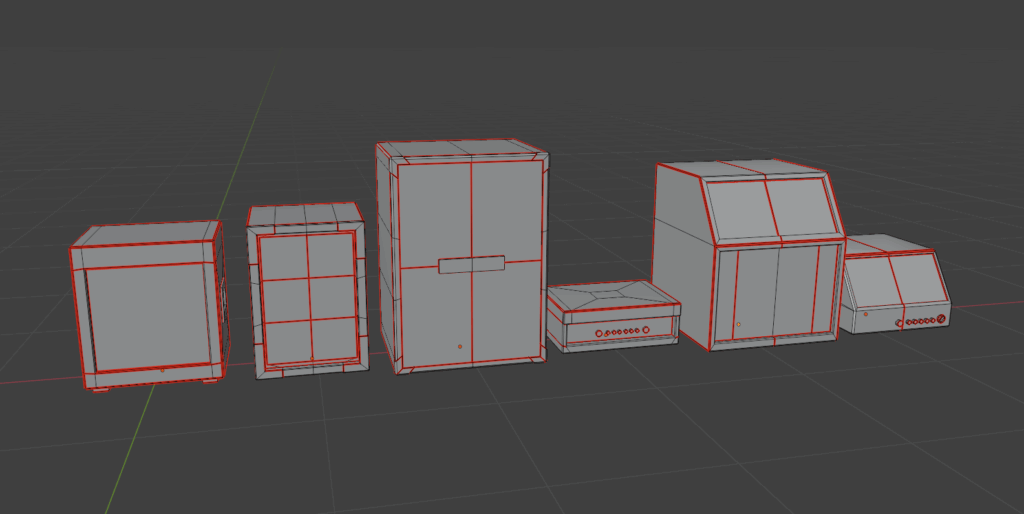

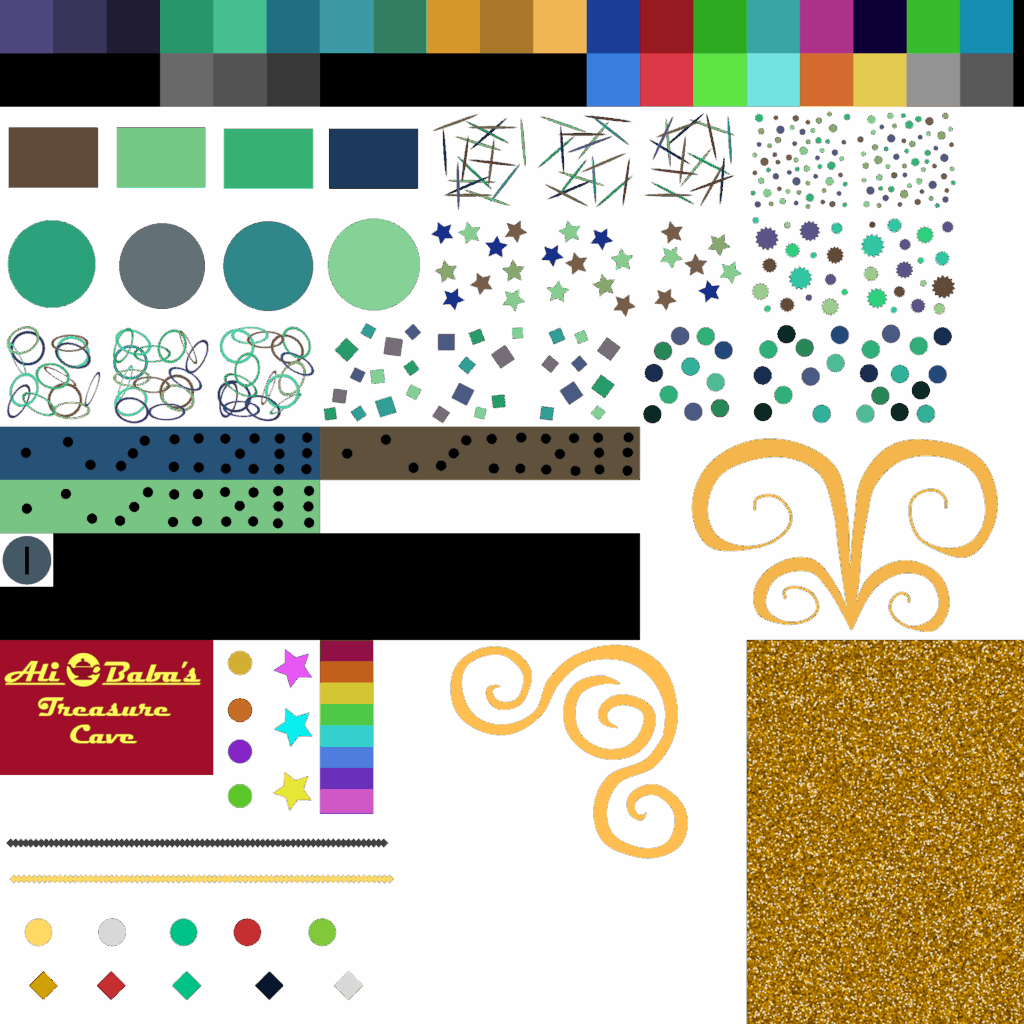

Challenge #2: Dense-Packed Textures, Minimum Vertices

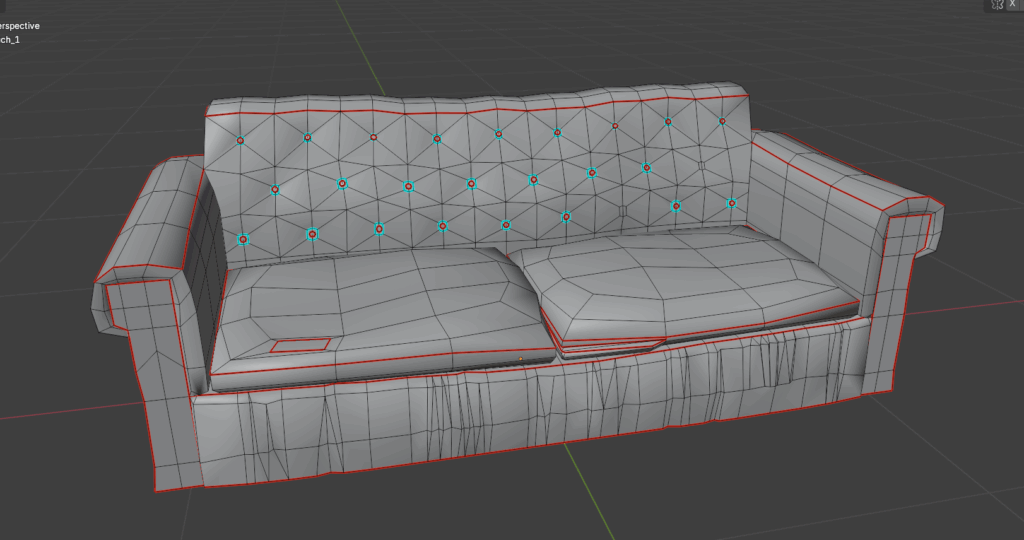

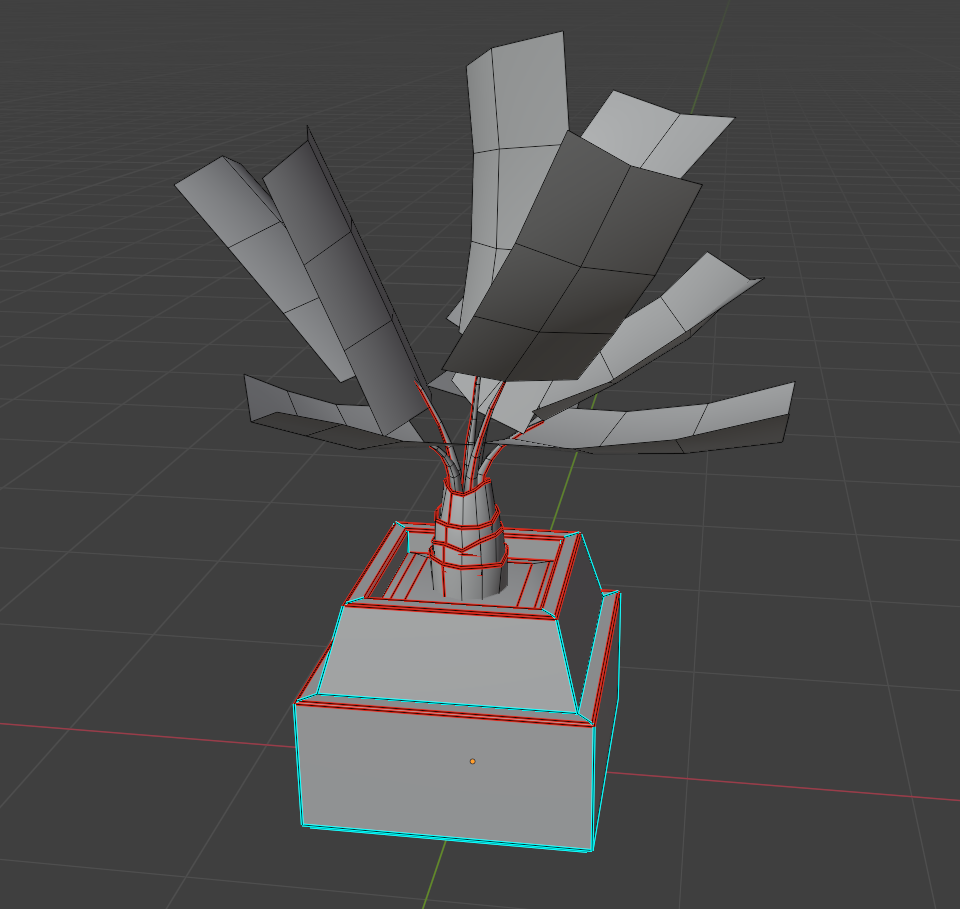

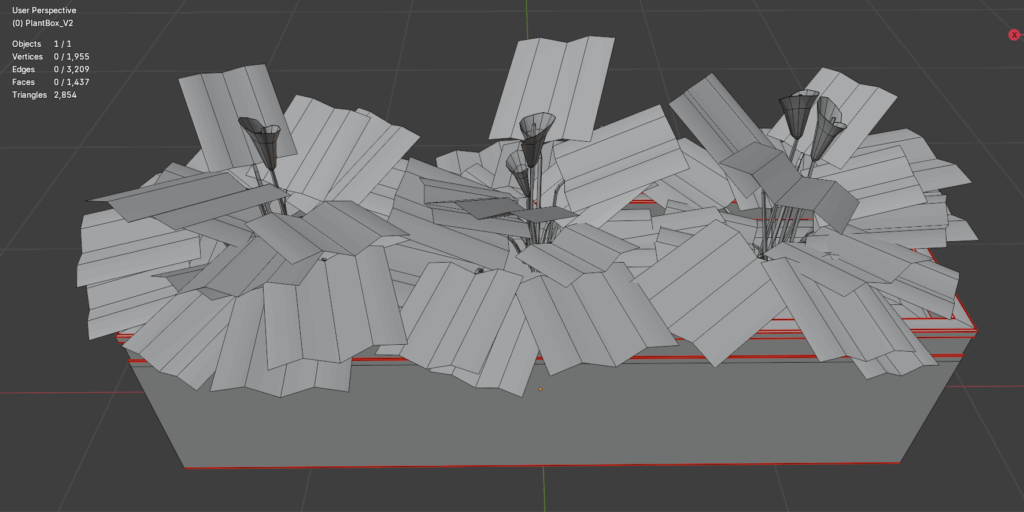

Modeling with minimal polygons was required for this project, since the game spaces were often quite busy and had a lot of small objects in them. But the director also wanted to avoid things looking too blocky. Getting the greatest possible visual mileage out of simple graphics was central to the project.

The Unity engine can batch multiple objects, processing them as though they were one, provided they all use the same material. This reduces draw calls and improves frame rate. To share a material, objects need to share the same texture map(s). With a little creativity, a whole lot of objects can be mapped onto one texture map. In fact, in some areas a whole room might use only a small handful of materials while each object still looks unique. This technique also makes large-scale adjustments to the color scheme easier, since only a few materials need to be modified.
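In practice, this layout is done by arranging UVs in the modeling tool, and the batching itself is handled by Unity. The sketch below only shows the arithmetic of packing several objects onto one shared texture (a texture atlas). The 2x2 layout and the prop names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AtlasTile:
    """An object's rectangle inside a shared texture atlas, in 0..1 UV space."""
    u0: float      # left edge
    v0: float      # bottom edge
    width: float
    height: float


def remap_uv(uv, tile):
    """Map a UV authored for a whole texture (0..1) into the tile's sub-rectangle."""
    u, v = uv
    return (tile.u0 + u * tile.width, tile.v0 + v * tile.height)


def remap_mesh_uvs(uvs, tile):
    """Remap every UV coordinate of one mesh into its atlas tile."""
    return [remap_uv(uv, tile) for uv in uvs]


# Hypothetical 2x2 atlas: each prop gets one quadrant of a single shared texture.
tiles = {
    "crate": AtlasTile(0.0, 0.0, 0.5, 0.5),
    "lamp": AtlasTile(0.5, 0.0, 0.5, 0.5),
    "book": AtlasTile(0.0, 0.5, 0.5, 0.5),
    "vase": AtlasTile(0.5, 0.5, 0.5, 0.5),
}
quad_uvs = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
for name, tile in tiles.items():
    print(name, remap_mesh_uvs(quad_uvs, tile))
```

Because all four props now sample the same texture, they can share one material. That shared material is what makes them candidates for batching.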

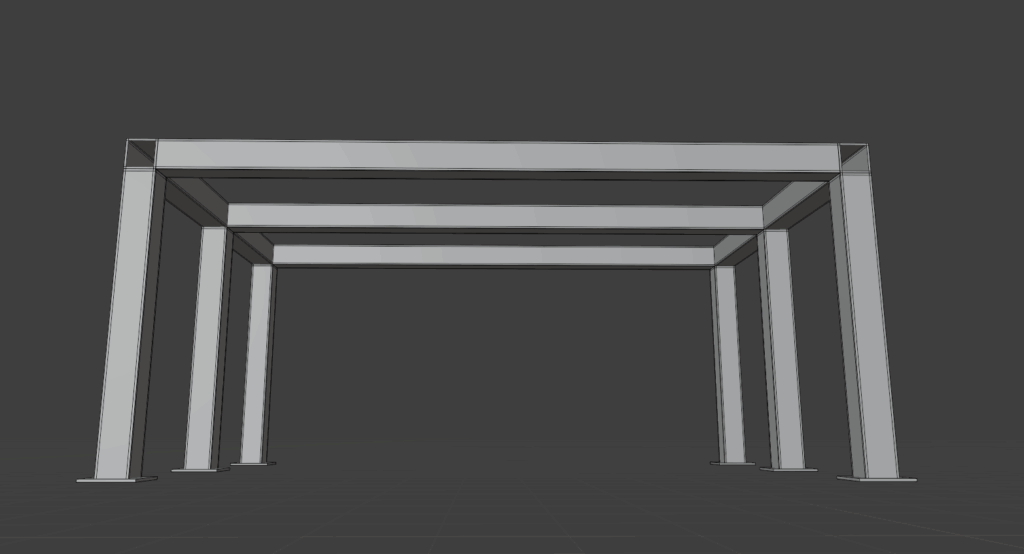

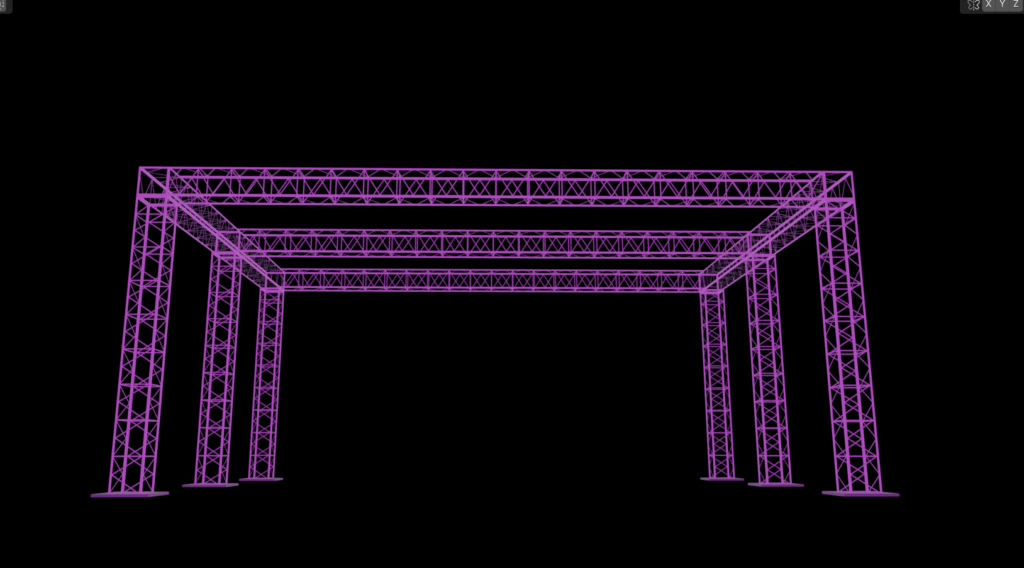

Some shapes, particularly repeating ones, can also be made with clipping maps, which make parts of an object visible and others invisible. I used this for things like scaffolding and plants, and it goes a long way toward keeping the models low-poly.
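A clipping map (often called an alpha cutout or alpha test) makes every pixel binary. Each pixel is either drawn fully opaque or discarded, which fits the "fully opaque or completely invisible" rule and avoids costly partial transparency. In a real shader this is typically done with HLSL's `clip()`. The Python below is only a sketch of the per-pixel logic, and the 0.5 cutoff and bar pattern are assumed values for illustration.

```python
def alpha_clip(rgba, cutoff=0.5):
    """Alpha-test one pixel: fully opaque or discarded, never blended."""
    r, g, b, a = rgba
    if a < cutoff:
        return None  # discarded: nothing is drawn, so there is no blending cost
    return (r, g, b, 1.0)  # drawn as fully opaque


def clip_row(pixels, cutoff=0.5):
    """Apply the alpha test across a row of pixels, e.g. one strip of a scaffolding texture."""
    return [alpha_clip(p, cutoff) for p in pixels]


# A flat quad textured with a hypothetical bar pattern: bars opaque, gaps clipped.
bar = (0.3, 0.3, 0.35, 1.0)
gap = (0.0, 0.0, 0.0, 0.0)
soft_edge = (0.3, 0.3, 0.35, 0.6)
row = [bar, bar, soft_edge, gap, gap, bar]
print(clip_row(row))
```

This is why a single quad can stand in for a whole scaffolding panel or a cluster of leaves. The silhouette comes from the texture, not from extra geometry.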

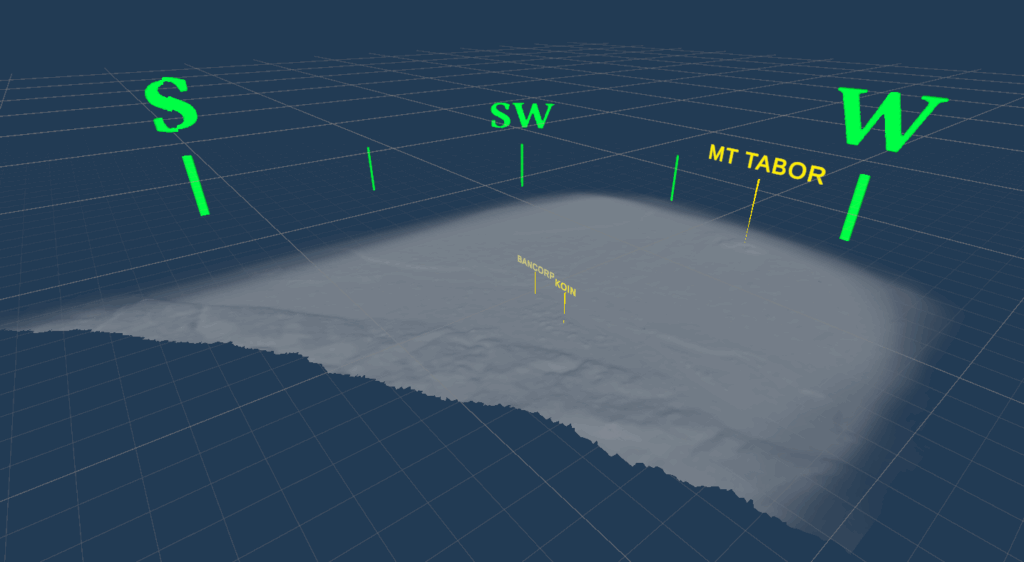

Process Thoughts: The Spatial World

VR adds a new dimension to design, literally. I often find that viewing an asset on-screen while building it and viewing it in VR are very different experiences.

Even after several years of working in VR, it still sometimes challenges my expectations. It makes me rethink size, color, brightness, and level of detail. Everything done in the 3D modeling software is amplified by the immersive nature of VR. Relative scale and space become vital, and even small animations inject life into a digital world.

Sometimes the best approach is just to iterate: try something that seems about right, spend a little time with it in the headset, then adjust and repeat as needed.

More About the Game

You can read more about LOVESICK on the Rose City Games website.

And check out the official release trailer!